Venture Bytes #129: AI Productivity Gains Following Familiar Path

AI Productivity Gains Following Familiar Path

"The gains are not apparent until you zoom out."

The Nobel Prize-winning economist Robert Solow said in 1987, “You can see the computer age everywhere but in the productivity statistics.” The same can be said today about artificial intelligence (AI). AI systems are spreading across coding, research, customer service, marketing, and finance, but aggregate productivity growth has not yet surged. In a recent survey of 6,000 CEOs by the National Bureau of Economic Research, 89% stated that AI has yet to move the needle on their bottom line. This widespread frustration is a normal feature of technological revolutions, which deliver miracles only after firms complete the long, messy work of organizational redesign.

Economic history identifies three distinct waves that every general-purpose technology must traverse before it changes the aggregate data. The first wave is the invention phase, where core capabilities like the steam engine, electricity, or large language models are perfected. The second is investment, where infrastructure is built and firms begin adopting the technology. The third is organizational change, where firms redesign processes around the technology rather than simply grafting it onto old ones. Productivity acceleration arrives only after those earlier stages mature. By most indicators, AI today sits somewhere between the second and third wave.

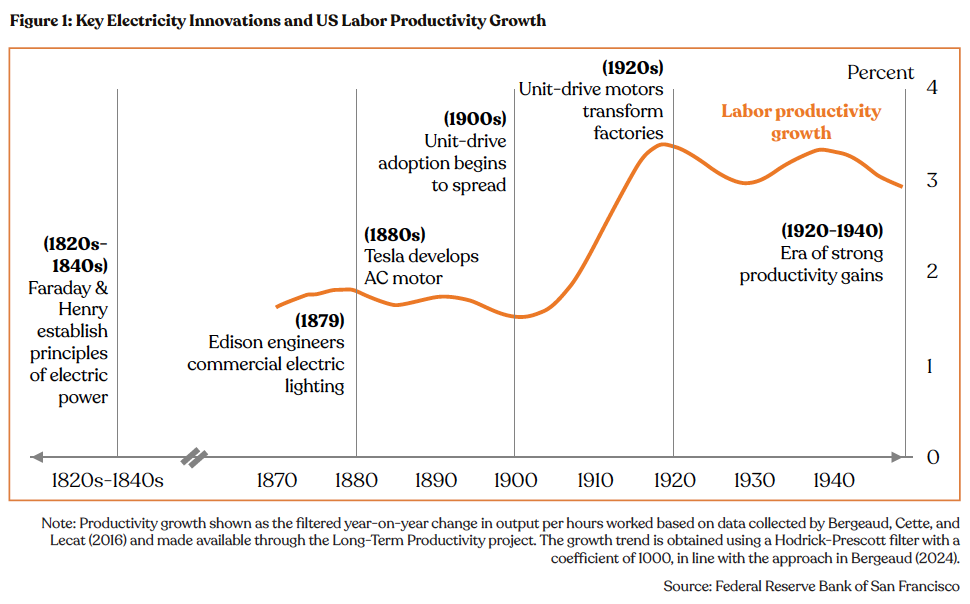

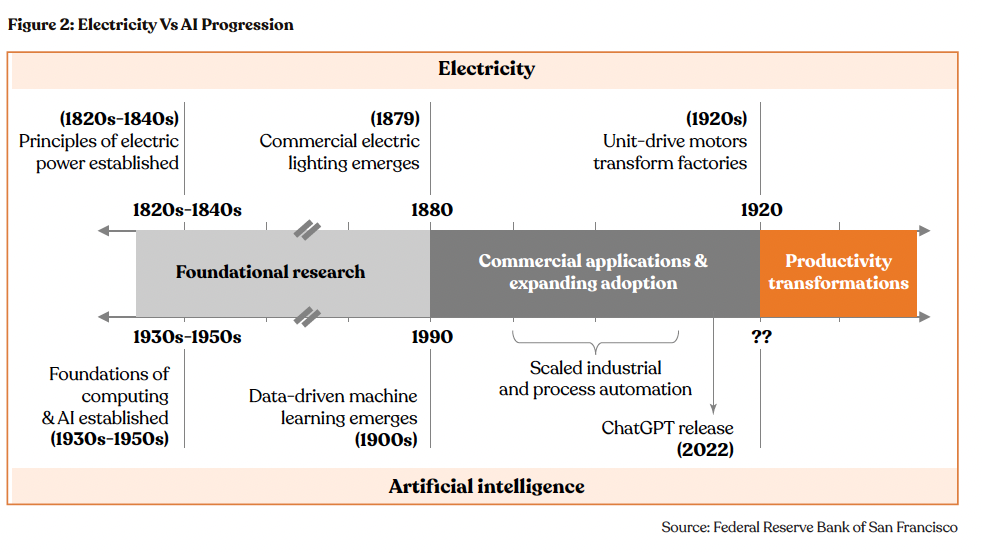

Mary Daly, President of the Federal Reserve Bank of San Francisco, made this case in a February 2026 economic letter. Electrification took nearly 100 years from Michael Faraday's discovery of fundamental principles of electricity generation in the 1820s and early 1830s to generating sustained productivity growth in the US. The light bulb, the electric motor, the grid, the transmission lines, each was a precondition for the next. And even that wasn't enough. The very essence of work had to change. Production had to be redesigned, factory floors reengineered, and workforces retrained. Firms had to shed the constraints of a steam-powered mental model before they could build for electricity.

AI's timeline is structurally similar but somewhat compressed. AI's origins trace back to the 1940s and 1950s. Applied machine learning and robotic process automation demonstrated narrow value for decades but never catalyzed broad productivity gains. Then, in 2022, roughly 70 years after AI's conceptual origins, ChatGPT put a natural language interface on top of vast computational capability and made it available to anyone. That is the equivalent of the light bulb moment. We are now laying the grid.

The Mechanics of the Productivity J-Curve

One reason productivity gains take time to appear is what economists call the productivity J-curve. MIT economists Erik Brynjolfsson, Daniel Rock, and Chad Syverson showed that in the early stages of adopting a general-purpose technology, firms spend heavily on intangible investments: proprietary datasets, new workflows, worker training, and redesigned business processes. National statistics treat most of these as costs rather than productive assets. As a result, measured productivity can appear flat or even fall precisely when firms are building the foundation for future gains.

The numbers bear this out. Brynjolfsson and his colleagues estimated that if national accounts had fully captured software-related intangible investments, US total factor productivity would have appeared 15.9% higher than official statistics suggested by the end of 2017. AI is almost certainly creating a similar effect today. Firms are accumulating large stocks of intangible capital in data infrastructure, AI workflows, and trained employees, but most of it remains invisible in the aggregate statistics. The J-curve pattern is not a flaw in the technology. It is a feature of how transformative technologies always enter the economy.
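The measurement gap described above can be made concrete with a toy calculation. The sketch below is illustrative only: every figure in it is made up, and the `productivity` helper is a hypothetical simplification, not the method used by Brynjolfsson, Rock, and Syverson. It compares productivity as official statistics would record it with a version that also counts intangible investment as output.

```python
# Toy illustration of the productivity J-curve. All figures are hypothetical.

def productivity(measured_output: float,
                 intangible_investment: float,
                 inputs: float) -> tuple[float, float]:
    """Return (measured, adjusted) productivity for one period.

    measured: counts only the output that official statistics record.
    adjusted: also counts intangible investment as output.
    """
    if inputs <= 0:
        raise ValueError("inputs must be positive")
    measured = measured_output / inputs
    adjusted = (measured_output + intangible_investment) / inputs
    return measured, adjusted


# (year, measured output, unrecorded intangible investment, inputs)
# Made-up values chosen only to show the dip-then-rise shape.
YEARS = [
    (1, 100.0, 0.0, 100.0),
    (2, 95.0, 10.0, 100.0),
    (3, 97.0, 12.0, 100.0),
    (4, 110.0, 5.0, 100.0),
    (5, 125.0, 2.0, 100.0),
]

for year, out, intangible, inputs in YEARS:
    measured, adjusted = productivity(out, intangible, inputs)
    print(f"year {year}: measured {measured:.2f}, adjusted {adjusted:.2f}")
```

In this toy series, measured productivity dips in years 2 and 3 while the adjusted figure keeps rising, then the two converge as the earlier investment starts to show up in recorded output.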

The early evidence suggests the curve may already be bending. Brynjolfsson estimated that US productivity jumped roughly 2.7% in 2025, nearly double the 1.4% annual average of the prior decade. Brynjolfsson reads this as the economy transitioning out of the investment phase into a harvest phase, where years of organizational preparation begin to manifest as measurable output. Several more periods of consistent data are needed to confirm a structural trend, but the early signal is hard to dismiss.

Micro-Evidence vs. Macro-Data

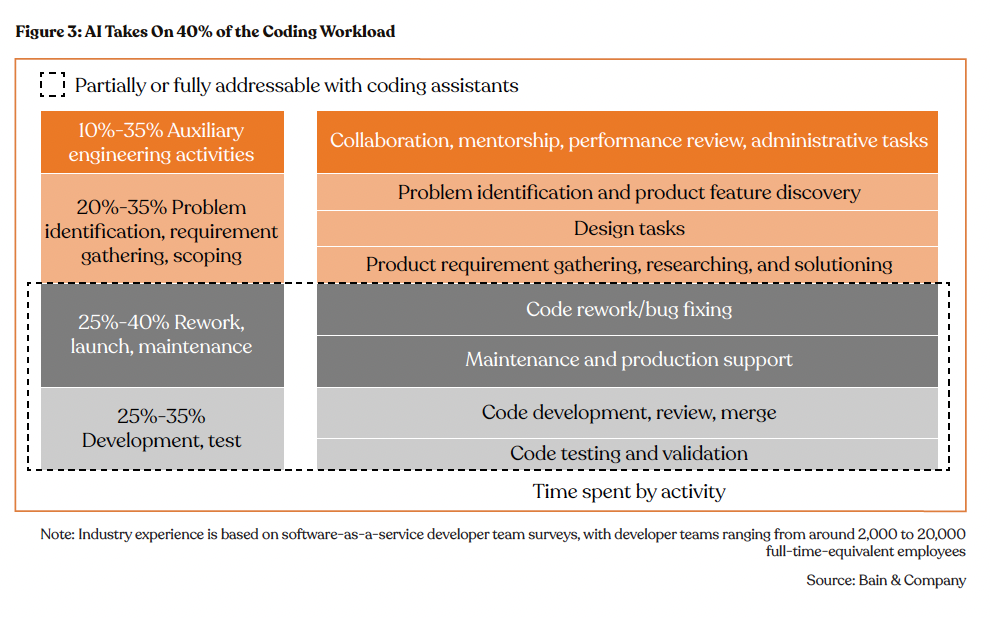

While aggregate data remains flat, task-level evidence already shows meaningful gains. A study of 5,172 customer support agents using a generative AI assistant found a 15% average productivity increase, with agents in the bottom skill quintile seeing a 36% boost. A Bain & Company report on software development found that teams using AI assistants report 10% to 15% productivity gains, with some firms achieving 25% to 30% boosts when they pair AI with full process transformation rather than incremental adoption.

The lag between these task-level gains and the macro data reflects a structural bottleneck. Bain noted that developers spend only 20% to 30% of their time on coding. Even a substantial speed-up in coding translates to modest overall gains if code review, testing, and deployment remain unchanged. This mirrors Mary Daly's example of factory electrification. Replacing the steam engine with an electric motor is progress, but the factory floor still constrains the output. The firms achieving outsized gains are the ones that extended AI across the entire workflow, not just the most visible task.
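The bottleneck can be put in numbers with an Amdahl's-law style calculation. The sketch below assumes the non-coding share of a developer's time is unchanged and that AI makes coding twice as fast. The 2x figure and the `overall_speedup` helper are illustrative assumptions, not numbers from the Bain report.

```python
def overall_speedup(coding_share: float, coding_speedup: float) -> float:
    """Amdahl's-law estimate of the whole-workflow speed-up.

    coding_share:   fraction of total time spent coding (0 to 1).
    coding_speedup: how many times faster coding becomes (> 0).
    Everything outside coding is assumed to take the same time as before.
    """
    if not 0.0 <= coding_share <= 1.0:
        raise ValueError("coding_share must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    return 1.0 / ((1.0 - coding_share) + coding_share / coding_speedup)


# Bain's 20% to 30% coding share, with a hypothetical 2x coding speed-up.
for share in (0.20, 0.30):
    gain = overall_speedup(share, 2.0) - 1.0
    print(f"coding share {share:.0%}: overall gain {gain:.1%}")
# coding share 20%: overall gain 11.1%
# coding share 30%: overall gain 17.6%
```

Even an arbitrarily large coding speed-up caps the overall gain at 25% when coding is 20% of the work (1 / 0.8 = 1.25), which is why larger gains require changing the rest of the workflow as well.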

The Shift to Agentic Organizations

The next stage of the AI revolution, expected between 2026 and 2028, will likely be defined by agentic workflows. Unlike early chatbots that respond to a prompt, autonomous AI agents are designed to perceive, reason, and act across multiple software systems to complete an entire goal. Research from IDC suggests that by 2027, agent use at the world's top 2,000 public companies will increase 10x, with token and API call loads rising 1,000x.

Agentic AI has the potential to solve the orchestration problem that currently hampers productivity. It allows firms to strip away layers of coordination, redirect human talent toward strategic decisions, and treat AI as the core architect of the operating model rather than a tool bolted onto the edge of it. JPMorgan Chase is already deploying agents that autonomously detect fraud patterns across millions of transactions, replacing static rule-based systems. This transition is precisely the organizational redesign phase that every general-purpose technology requires before it shows up in aggregate productivity data.

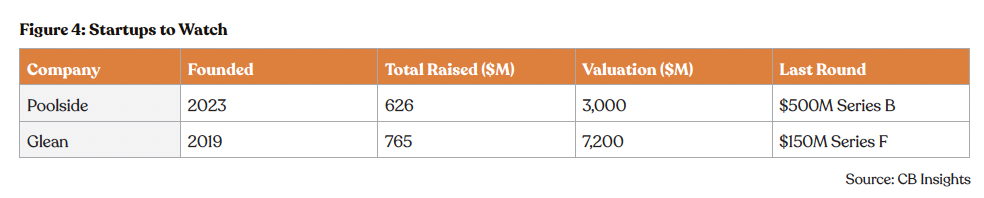

The companies that will capture disproportionate value from AI are not the ones using it as a shortcut inside existing processes but the ones redesigning the process entirely. Founded in 2023, Poolside is trying to bring artificial general intelligence into software development. The company is training a foundation model built specifically for software engineering rather than adapting a general-purpose LLM to code. California-based Glean, valued at $7.2 billion, sits at the intersection of the reorganization problem and the shift to agentic workflows. Glean has built an agentic enterprise search layer that not only retrieves information but proactively surfaces it during workflows. As firms move from AI pilots to full process redesign, Glean becomes the connective tissue that makes that redesign possible.

The productivity paradox of the early 2020s will likely transform into the productivity boom of the 2030s as firms work through the organizational adjustment that every general-purpose technology demands. The emergence of agentic AI, the accumulation of intangible investment in data and software, and the early harvest-phase signals in the 2025 productivity data all point in the same direction.

Solid-State Transformers Add Intelligence Quotient to the Grids

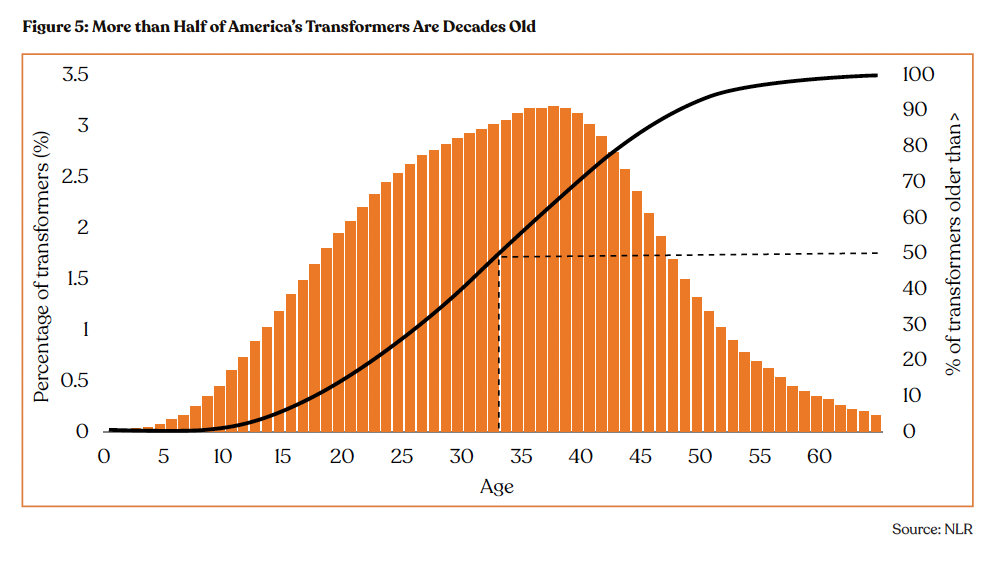

The US grid hosts roughly 80 million transformers, and over half of them are more than 30 years old, according to data from the National Laboratory of the Rockies (NLR). The core hardware hasn't meaningfully changed in half a century, and for most of that time, it didn't need to. Power flowed one way from large generators to passive loads. Traditional transformers were built to be reliable, not intelligent. That job is no longer simple. Solid-state transformers (SSTs) are increasingly positioned as the solution, enabling the kind of dynamic, high-density power management that modern loads demand.

NLR estimates that the US has 60–80 million distribution transformers with between 2.5 and 3.5 TVA of capacity, and that roughly 55% of in-service units are more than 33 years old and approaching end of life.

A conventional transformer takes high-voltage electricity and steps it down to usable levels. It does this passively, through a magnetic core, with no ability to sense, adapt, or respond. That was fine when power flowed in one direction, from large generators to passive loads. Today's grid is different. Solar panels feed power back into distribution lines. EV chargers create sudden load spikes. Batteries charge and discharge based on price signals. The old transformer has no idea any of this is happening.

The physics compound the problem at the rack level. High-performance GPUs now require up to 700 W each, with Blackwell GPUs rated at 1,000 W or more. The power consumed by just one rack of servers is rising to 90-120 kW, up from 15-30 kW just a few years ago. About 12% of electricity is wasted before it even enters the GPU, creating 15 kW of heat per rack that must be dissipated. Each conversion stage adds loss, heat, and failure risk.
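The heat figure follows from simple arithmetic, sketched below. The calculation assumes the 90-120 kW rack figure is the power drawn at the input of the conversion chain and that all of the 12% loss ends up as heat. The `conversion_heat_kw` helper is a hypothetical name used only for illustration.

```python
def conversion_heat_kw(rack_input_kw: float, loss_fraction: float = 0.12) -> float:
    """Heat (kW) produced by conversion losses upstream of the GPUs.

    rack_input_kw: power drawn by the rack, in kW (assumed to be input power).
    loss_fraction: share of that power lost in conversion (0.12 per the text).
    """
    if rack_input_kw < 0:
        raise ValueError("rack_input_kw must be non-negative")
    if not 0.0 <= loss_fraction <= 1.0:
        raise ValueError("loss_fraction must be between 0 and 1")
    return rack_input_kw * loss_fraction


# The two ends of the 90-120 kW range quoted above.
for rack_kw in (90.0, 120.0):
    heat = conversion_heat_kw(rack_kw)
    print(f"{rack_kw:.0f} kW rack: about {heat:.1f} kW of conversion heat")
# 90 kW rack: about 10.8 kW of conversion heat
# 120 kW rack: about 14.4 kW of conversion heat
```

At the top of the range the result is close to the roughly 15 kW per rack cited above.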

Unlike conventional transformers, which move electricity through magnetic cores, SSTs use semiconductor switches controlled by software to regulate voltage and power flow in real time. This makes them programmable, responsive, and capable of being coordinated as nodes in a managed network.

The concept of SSTs is not new. GE's William McMurray described an electronic transformer in 1968, and research prototypes have existed for decades. The obstacle was always materials. Silicon semiconductors couldn't efficiently handle both high voltage and high switching speeds at manufacturable cost. Silicon carbide and gallium nitride resolved that. As SiC wafer production has scaled globally, device costs have come down enough to make commercial deployment viable.

The SiC cost curve has moved decisively in SSTs' favor. The price of a 6-inch SiC substrate declined from around $700 in early 2024 to around $500 by mid-2024, and hovered around $400 in 2025. In 2024 alone, 14 new 8-inch SiC plants were under construction globally. For SST manufacturers, this is the equivalent of the solar panel cost curve.

Yet for all their promise, SSTs are unlikely to replace large substation transformers, at least not yet. SSTs still carry a significant capital cost premium over conventional units. Additionally, they require new protection frameworks that utilities have not yet developed.

The immediate demand is likely to come from data centers, EV charging hubs, and renewable power generation, where SSTs replace not just transformers but several pieces of adjacent equipment simultaneously.

In a conventional data center power chain, incoming medium-voltage power passes through a transformer, then an inverter, then an uninterruptible power supply (UPS) system, then a distribution unit. Each of these separate hardware stacks has its own footprint, cooling requirement, and failure mode. An SST converts medium-voltage AC to the DC voltages that servers run on, handles power quality management, and integrates behind-the-meter renewable connections directly. When coupled with grid-scale batteries, it also eliminates the need for a dedicated UPS system entirely, freeing the floor space for more compute racks.
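Why consolidation matters for losses can be sketched by multiplying per-stage efficiencies. The 97% per-stage value below is a hypothetical placeholder chosen for illustration, not a measured figure. The 98.5% value echoes the conversion efficiency this article cites for one SST platform, and `chain_efficiency` is an illustrative helper.

```python
from math import prod


def chain_efficiency(stage_efficiencies) -> float:
    """End-to-end efficiency of conversion stages connected in series."""
    effs = list(stage_efficiencies)
    for eff in effs:
        if not 0.0 < eff <= 1.0:
            raise ValueError("each efficiency must be in (0, 1]")
    return prod(effs)


# Hypothetical per-stage efficiencies for the four-stage chain described above.
CONVENTIONAL = {
    "transformer": 0.97,
    "inverter": 0.97,
    "UPS": 0.97,
    "distribution unit": 0.97,
}
# Single consolidated stage, using the 98.5% efficiency cited in this article.
SST = {"solid-state transformer": 0.985}

for label, stages in (("conventional chain", CONVENTIONAL), ("single SST stage", SST)):
    eff = chain_efficiency(stages.values())
    print(f"{label}: {eff:.1%} efficient, {1 - eff:.1%} lost")
# conventional chain: 88.5% efficient, 11.5% lost
# single SST stage: 98.5% efficient, 1.5% lost
```

Under these assumed values, four modest per-stage losses compound to roughly the 12% waste mentioned earlier, while a single high-efficiency stage avoids most of it.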

The same consolidation applies at EV charging hubs. A high-power DC fast charger today requires separate equipment for voltage conversion, power quality management, and grid-storage interfacing. SSTs handle all three natively.

The demand from renewables will be driven by the urgent need to manage bidirectional flows from solar and wind installations. For most of the grid's history, electricity was generated centrally and consumed locally. With renewables, millions of distributed solar and wind sources create a grid that flows in every direction simultaneously. A passive transformer has no ability to sense or mediate that complexity.

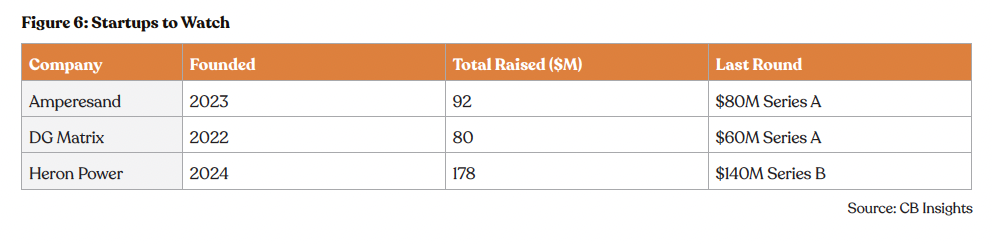

The commercial landscape of SSTs is still taking shape, but some well-capitalized startups have made commendable progress, each placing bets on a different segment of the opportunity.

Amperesand is targeting hyperscale AI data centers and defense infrastructure. Spun out of Nanyang Technological University in 2023, the company's third-generation SST platform is built on silicon carbide and delivers MV AC to LV DC conversion efficiency above 98.5%. Its system reduces installation labor requirements by 50%, accelerates time-to-power by a factor of ten, and achieves over 80% reduction in electrical footprint compared to conventional configurations, directly addressing the floor space economics that hyperscale operators are acutely sensitive to. The company plans first commercial deployments in Singapore later in 2026.

Backed by strategic investors ABB, Chevron Technology Ventures, and Clean Energy Ventures, DG Matrix is another promising startup. The company's core differentiator is its patented Interport technology, which is a power routing architecture that can simultaneously accept and distribute power from multiple sources to multiple loads of differing voltages.

Founded by Drew Baglino, who led Tesla's powertrain and energy engineering for over a decade, Heron Power is targeting the medium-voltage segment in data centers, solar farms, and grid-scale battery installations. Its Heron Link transformer units are designed to give server racks 30 seconds of bridge power while backup sources come online, occupy 70% less space than the equipment they replace, and at a solar farm can perform the functions of both an inverter and a transformer at the same combined cost. The company is building a U.S. factory targeting 40 GW of annual production capacity, with full-scale output targeted for H2 2027.

The transformer is infrastructure's most invisible component, critical to everything but unchanged for half a century. What SSTs offer is not just a hardware upgrade, but a distributed network of programmable, software-controlled power nodes capable of responding to the complexity that renewables, EVs, and AI compute have introduced into the system.
